Testcase Best Practices

Writing Effective Testcases

Clear and Specific Descriptions

Write testcase descriptions that clearly state what is being tested and why. Avoid ambiguous language.

✅ Good: "Test the system's ability to support multiple network and application keys with proper key management"

❌ Poor: "Test key functionality"

Comprehensive Preconditions

Define all necessary setup conditions before test execution begins. Include system state, configurations, and dependencies.

Essential Preconditions:

- System power and connectivity status

- Required data or configurations

- User permissions and access levels

- Environmental conditions
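One way to keep preconditions from being skipped is to record each one as an explicit check that runs before the test starts. The sketch below is a minimal illustration, not a Pidima feature: the `Precondition` class, the `unmet_preconditions` helper, the state keys, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass(frozen=True)
class Precondition:
    """One setup condition that must hold before the test starts."""
    description: str
    check: Callable[[Dict[str, object]], bool]


def unmet_preconditions(preconditions: List[Precondition],
                        state: Dict[str, object]) -> List[str]:
    """Return the descriptions of every precondition that does not hold."""
    return [p.description for p in preconditions if not p.check(state)]


# Hypothetical preconditions, one per category in the list above.
PRECONDITIONS = [
    Precondition("Device is powered on and connected",
                 lambda s: s.get("powered") is True and s.get("connected") is True),
    Precondition("Required configuration is loaded",
                 lambda s: s.get("config_loaded") is True),
    Precondition("Tester has the 'admin' role",
                 lambda s: s.get("role") == "admin"),
    Precondition("Ambient temperature is between 15 and 30 °C",
                 lambda s: 15 <= s.get("temperature_c", float("nan")) <= 30),
]

# Example system state (assumed values) with one precondition not met.
state = {
    "powered": True,
    "connected": True,
    "config_loaded": False,
    "role": "admin",
    "temperature_c": 22,
}
missing = unmet_preconditions(PRECONDITIONS, state)
```

Reporting the unmet conditions by name, rather than failing on the first one, tells the tester exactly what setup is still missing.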

Actionable Test Steps

Write steps as clear, executable commands that any tester can follow without additional context.

Step Structure:

- Use imperative verbs (Create, Verify, Configure, Test)

- Specify exact values and parameters

- Number steps sequentially

- Keep each step focused on a single action

Example:

1. Create and store at least two network keys

2. Implement key management protocols

3. Verify keys are properly secured

4. Generate audit logs

Measurable Outcomes

Define expected results with specific, verifiable criteria that eliminate ambiguity.

Outcome Characteristics:

- Quantifiable metrics (e.g., "maximum of 32 entries")

- Clear pass/fail conditions

- Specific system responses

- Observable behaviors
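A quantifiable outcome such as "maximum of 32 entries" translates directly into a pass/fail check. The following is a small sketch under assumed names (`Outcome`, `check_entry_limit`); how the entry count is read from the system under test is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    """Result of one expected-result check."""
    passed: bool
    detail: str


MAX_ENTRIES = 32  # limit taken from the "maximum of 32 entries" example above


def check_entry_limit(entry_count: int, limit: int = MAX_ENTRIES) -> Outcome:
    """Pass only if the observed entry count is within the limit."""
    passed = 0 <= entry_count <= limit
    detail = f"observed {entry_count} entries, limit is {limit}"
    return Outcome(passed, detail)


at_limit = check_entry_limit(32)    # boundary value, expected to pass
over_limit = check_entry_limit(33)  # one past the boundary, expected to fail
```

Recording the observed value alongside the verdict gives reviewers something concrete to compare against the expected result.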

Organization and Structure

Consistent Naming Conventions

Use standardized testcase IDs that reflect hierarchy and traceability:

- Format: TC_{PROJECT}_{REQUIREMENT}_{NUMBER}

- Example: TC_DBA_REQ-ALS-006_002
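An ID convention like this can be checked mechanically. The sketch below parses IDs with a regular expression. The character rules are assumptions inferred from the single example above (uppercase alphanumeric project code, hyphen-separated requirement ID, three-digit number) and may need adjusting for a real project.

```python
import re
from typing import Dict, Optional

# Assumed pattern, inferred from the example ID; not an official specification.
TESTCASE_ID = re.compile(
    r"TC_(?P<project>[A-Z0-9]+)"
    r"_(?P<requirement>[A-Z0-9]+(?:-[A-Z0-9]+)*)"
    r"_(?P<number>\d{3})"
)


def parse_testcase_id(testcase_id: str) -> Optional[Dict[str, str]]:
    """Split a testcase ID into its parts, or return None if it is malformed."""
    match = TESTCASE_ID.fullmatch(testcase_id)
    return match.groupdict() if match else None


parts = parse_testcase_id("TC_DBA_REQ-ALS-006_002")
# parts == {"project": "DBA", "requirement": "REQ-ALS-006", "number": "002"}
```

Because the requirement ID is embedded in the testcase ID, the parsed `requirement` field can also be used to cross-check the testcase's link to its parent requirement.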

Logical Grouping

- Group testcases by functional area or component

- Maintain clear relationships to parent requirements

- Use levels to organize complexity (unit → integration → system)

Atomic Testcases

- Each testcase should validate one specific function or requirement

- Avoid combining multiple unrelated validations

- Keep testcases independent and executable in isolation

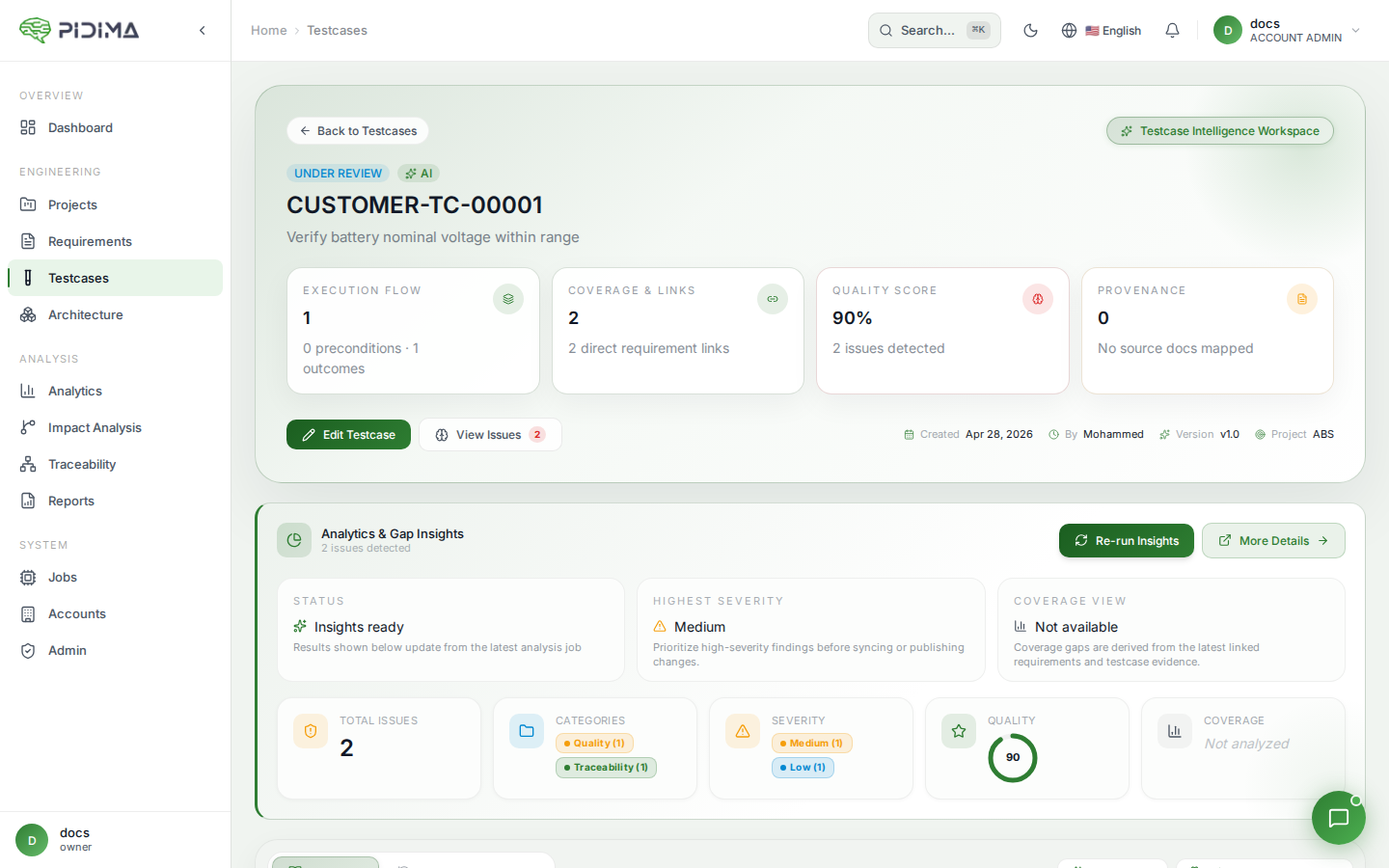
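For automated tests, atomicity and independence might look like the sketch below: each test method validates one behavior and starts from a fresh fixture, so the tests can run in any order or on their own. `KeyStore` is a hypothetical stand-in for the system under test.

```python
import unittest


class KeyStore:
    """Hypothetical in-memory store, standing in for the system under test."""

    def __init__(self) -> None:
        self._keys = {}

    def add(self, name: str, value: bytes) -> None:
        if name in self._keys:
            raise ValueError(f"duplicate key name: {name}")
        self._keys[name] = value

    def count(self) -> int:
        return len(self._keys)


class KeyStoreTests(unittest.TestCase):
    def setUp(self) -> None:
        # A fresh store per test keeps each testcase executable in isolation.
        self.store = KeyStore()

    def test_stores_two_network_keys(self) -> None:
        # Validates one function only: storing multiple keys.
        self.store.add("net-1", b"\x01" * 16)
        self.store.add("net-2", b"\x02" * 16)
        self.assertEqual(self.store.count(), 2)

    def test_rejects_duplicate_key_name(self) -> None:
        # A separate testcase for a separate behavior: duplicate handling.
        self.store.add("net-1", b"\x01" * 16)
        with self.assertRaises(ValueError):
            self.store.add("net-1", b"\x02" * 16)


def run_suite() -> unittest.TestResult:
    """Load and run the two testcases, returning the collected result."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(KeyStoreTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)
```

If the duplicate-name check were folded into the first test, a failure would not show which behavior broke. Keeping them separate makes the failing function obvious from the test name.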

Leveraging Pidima's AI Capabilities

AI-Generated Testcases

- Review generated testcases for completeness and accuracy

- Customize AI outputs to match project-specific needs

- Validate that all requirement aspects are covered

Traceability

- Maintain clear links between requirements and testcases

- Use Pidima's atomic linking to track decomposition

- Ensure bidirectional traceability for compliance
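Bidirectional traceability can be audited from exported link data. The sketch below does not use any Pidima API. It assumes the links are available as a plain mapping from testcase ID to requirement IDs, and every ID other than the example used earlier is made up for illustration.

```python
from typing import Dict, List, Set, Tuple


def trace_gaps(requirements: Set[str],
               testcases: Dict[str, Set[str]]) -> Tuple[List[str], List[str]]:
    """Return (requirements with no testcase, testcases with no known requirement)."""
    covered = set().union(*testcases.values())
    # Forward direction: every requirement should be reached by some testcase.
    uncovered = sorted(requirements - covered)
    # Backward direction: every testcase should point at a known requirement.
    orphans = sorted(tc for tc, reqs in testcases.items()
                     if not (reqs & requirements))
    return uncovered, orphans


requirements = {"REQ-ALS-006", "REQ-ALS-007"}
testcases = {
    "TC_DBA_REQ-ALS-006_001": {"REQ-ALS-006"},
    "TC_DBA_REQ-ALS-006_002": {"REQ-ALS-006"},
    "TC_DBA_REQ-ALS-009_001": {"REQ-ALS-009"},  # links to an unknown requirement
}
uncovered, orphans = trace_gaps(requirements, testcases)
```

Here `uncovered` lists requirements that still need a testcase, and `orphans` lists testcases whose requirement link is missing or stale.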

Continuous Refinement

- Regularly review testcase effectiveness

- Update testcases when requirements change

- Use revision tracking to maintain history

Common Pitfalls to Avoid

| Pitfall | Impact | Solution |
|---|---|---|
| Vague descriptions | Inconsistent test execution | Use specific, measurable language |
| Missing preconditions | Test failures due to setup issues | Document all prerequisites |
| Combined test scenarios | Difficult debugging | Create atomic, focused testcases |
| Hardcoded values | Maintenance overhead | Use parameters where possible |
| No negative testing | Incomplete coverage | Include failure scenarios |
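The last two rows of the table can be addressed together with a table of test cases: shared values are defined once as parameters, and failure scenarios sit next to the success case. This is a minimal sketch. `is_valid_key` and the 16-byte key length are assumptions made for the example.

```python
from typing import List, Tuple

KEY_LENGTH_BYTES = 16  # assumed key size; defined once rather than hardcoded per test


def is_valid_key(key: bytes) -> bool:
    """Hypothetical function under test: accept only keys of the expected length."""
    return len(key) == KEY_LENGTH_BYTES


# (description, input, expected result); negative scenarios sit beside the positive one.
CASES: List[Tuple[str, bytes, bool]] = [
    ("exact length is accepted", b"\x00" * KEY_LENGTH_BYTES, True),
    ("empty key is rejected", b"", False),
    ("short key is rejected", b"\x00" * (KEY_LENGTH_BYTES - 1), False),
    ("long key is rejected", b"\x00" * (KEY_LENGTH_BYTES + 1), False),
]


def run_cases() -> List[str]:
    """Return the descriptions of failing cases; an empty list means all passed."""
    return [desc for desc, key, expected in CASES
            if is_valid_key(key) != expected]


failures = run_cases()
```

If the key length changes, only `KEY_LENGTH_BYTES` needs updating, and the boundary cases follow automatically.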

Integration with Development Lifecycle

- Early Creation: Generate testcases during requirement definition

- Continuous Updates: Refine as implementation progresses

- Execution Tracking: Document test results and defects

- Feedback Loop: Use test results to improve requirements

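Following these practices helps teams write testcases that are specific, atomic, and traceable to their requirements, which keeps them maintainable as requirements change. Pidima's AI-powered automation can then accelerate testcase creation and upkeep, with human review confirming completeness and accuracy.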